Article by Craig Macadam, Conservation Director, Buglife

Every year, millions of insects are collected, photographed and identified by entomologists around the world. Yet, with over one million known insect species and an estimated four to six million more awaiting discovery, even experienced entomologists can spend hours puzzling over a single specimen. The shortage of taxonomic experts compounds this challenge, creating the so-called ‘taxonomic impediment’ – a bottleneck that slows biodiversity research and conservation efforts worldwide.

This is where artificial intelligence (AI) comes in. Over the past decade, machine learning algorithms have been developed that can recognise patterns in images with remarkable precision. If these systems can distinguish between human faces or identify objects in photographs, researchers reasoned, perhaps they could learn to tell a beetle from a butterfly, or even distinguish between closely related species that look nearly identical to the untrained eye.

The core technology behind these systems is the convolutional neural network, a type of artificial intelligence that processes images in layers, gradually learning to recognise increasingly complex features. Much like a student learning entomology, the system starts by noticing basic shapes and colours, then progresses to more sophisticated features like wing venation patterns or the curve of an antenna. The crucial difference is speed: what might take a human years to master, such a system can learn in hours when given sufficient training data.
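To make the idea of a "layer" concrete, here is a minimal sketch in Python of the basic operation inside a convolutional layer: sliding a small filter across an image and recording where it matches. The image and the filter values are invented for illustration. Real networks learn thousands of filters from training data, and nothing here reflects how any of the apps tested below are actually built.

```python
# Toy illustration of a single convolutional filter (not any app's real model).

def convolve2d(image, kernel):
    """Slide the kernel over the image (no padding) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            total = 0
            for i in range(kh):
                for j in range(kw):
                    total += image[r + i][c + j] * kernel[i][j]
            row.append(total)
        output.append(row)
    return output


def relu(feature_map):
    """Keep positive responses only, as most networks do between layers."""
    return [[max(0, value) for value in row] for row in feature_map]


# Hypothetical 5x5 greyscale patch: dark (0) on the left, bright (1) on the right,
# a crude stand-in for the edge of a pale wing against a dark background.
image = [
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
    [0, 0, 1, 1, 1],
]

# Hand-written vertical-edge filter: responds where brightness rises left to right.
vertical_edge = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = relu(convolve2d(image, vertical_edge))
for row in feature_map:
    print(row)
```

The filter responds strongly where the dark-to-bright boundary falls inside its window and gives zero over the uniformly bright region. Later layers combine many such simple responses into the more complex features described above.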

So, how well does AI identify insects?

For this article I’ve tested five readily available AI engines.

- Obsidentify is a specialised insect identification application that uses AI to help users identify various insect species through photographs. The app allows users to take pictures of insects they encounter and receive identification suggestions, together with a percentage rating of the identification based on visual analysis.

- Apple has integrated AI capabilities into iPhones that allow users to identify insects through the device’s native assistant. By activating Siri’s visual lookup feature, users can take or select photos of insects and receive identification information directly through Apple’s ecosystem. This functionality is built into iOS devices, eliminating the need for separate app downloads.

- Google Lens is Google’s powerful visual search tool that can identify insects amongst countless other objects, plants and animals. Users can point their camera at an insect or upload an existing photo, and Google Lens will analyse the image and provide identification results along with relevant web information about the species.

- iNaturalist Seek is an educational app designed to encourage exploration of the natural world, with strong capabilities for insect identification. Developed by the California Academy of Sciences and National Geographic, Seek uses image recognition technology to identify insects in real-time through the device’s camera.

- Insect.ID is a dedicated identification app that focusses specifically on the world of insects, offering specialised AI-powered recognition for various insect orders and species. The app allows users to photograph insects and receive detailed identification results, often including information about the insect’s taxonomy, distribution and ecological role.

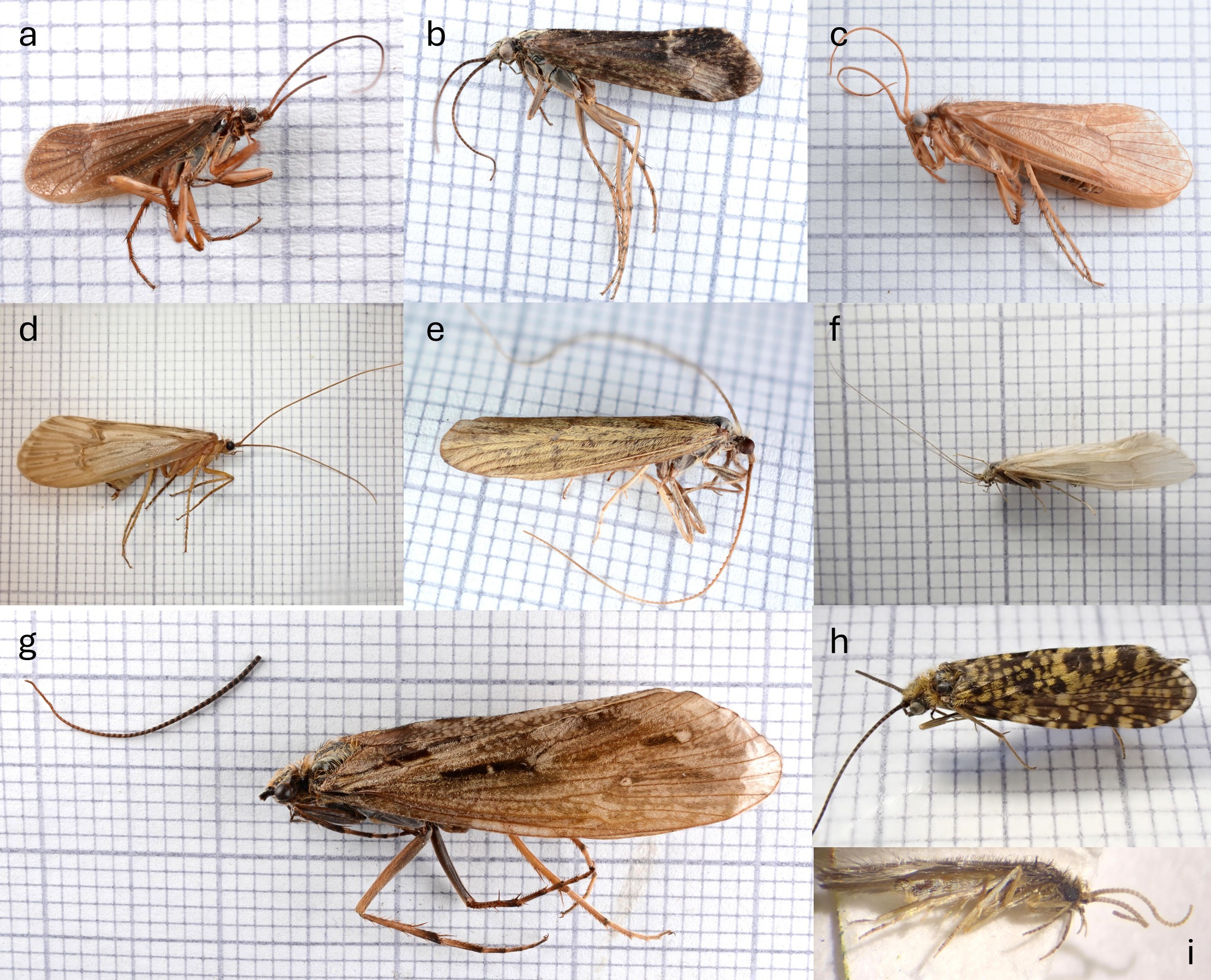

Figure 1. Images of live specimens used: a) Glyphotaelius pellucidulus; b) Limnephilus flavicornis; c) Potamophylax latipennis; d) Limnephilus marmoratus; e) Rhyacophila dorsalis; f) Limnephilus lunatus; g) Agrypnia varia; h) Limnephilus auricula; i) Polycentropus flavomaculatus. Credit: Craig Macadam.

I tested these apps on images of caddisflies (Trichoptera) collected while light trapping in central Scotland. I used images of both live and dead specimens, nine of each (Figs 1 and 2), and compared the number of correct identifications at family, genus and species level.

The results are shown in Table 1 at the bottom of this page.

So how did they do?

Figure 2. Images of dead specimens used: a) Chaetopteryx villosa; b) Limnephilus sparsus; c) Micropterna lateralis; d) Halesus radiatus; e) Odontocerum albicorne; f) Oecetis ochracea; g) Phryganea bipunctata; h) Philopotamus montanus; i) Oxyethira flavicornis. Credit: Colin Legg.

The clear leader was Obsidentify, achieving 94% accuracy at family level, 78% at genus level and 61% at species level in the overall assessment. At the opposite end of the spectrum, iNaturalist demonstrated weaker performance, correctly identifying only 22% of specimens at species level overall, though it managed 67% accuracy when restricted to family-level identification.

Insect.ID matched Obsidentify’s species-level performance at 61% overall, whilst Apple Siri and Google Lens both achieved 28% accuracy at species level. However, these applications showed differing strengths at higher taxonomic levels, with Insect.ID reaching 83% at family level compared to 78% for Google Lens and 67% for Siri.

The distinction between live and dead specimens proved important for most applications tested. Obsidentify and Insect.ID demonstrated the most dramatic decline in identification ability, dropping from 78% species-level accuracy with live specimens to 44% with dead ones. Conversely, iNaturalist maintained its modest 22% species-level accuracy regardless of specimen condition, suggesting either robustness to morphological changes or a consistently limited capability. Apple Siri and Google Lens showed relatively minor fluctuations between conditions, with Siri actually improving slightly from 22% to 33% at species level when identifying dead specimens.
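With samples this small, each percentage corresponds to a handful of specimens. The Python sketch below shows the arithmetic, assuming nine specimens per condition (one per figure panel, a to i). The counts of correct identifications are back-calculated from the published percentages as an illustration and are not figures reported in the article.

```python
# Assumption: nine live and nine dead specimens (one per figure panel a-i).
SPECIMENS_PER_CONDITION = 9


def percent(correct, total):
    """Accuracy as a whole-number percentage."""
    return round(100 * correct / total)


# Back-calculated (assumed) species-level counts for Obsidentify.
live_correct = 7  # 7 of 9
dead_correct = 4  # 4 of 9

live_pct = percent(live_correct, SPECIMENS_PER_CONDITION)
dead_pct = percent(dead_correct, SPECIMENS_PER_CONDITION)
overall_pct = percent(live_correct + dead_correct, 2 * SPECIMENS_PER_CONDITION)

# How much a single specimen moves the score within one condition.
one_specimen_pct = percent(1, SPECIMENS_PER_CONDITION)

print(live_pct, dead_pct, overall_pct, one_specimen_pct)
```

Under this assumption a single specimen shifts a per-condition score by about 11 percentage points, so a change such as 22% to 33% amounts to one additional correct identification.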

The marked superiority of Obsidentify across nearly all categories suggests a fundamental difference in either training data quality, algorithmic sophistication or both. In contrast, the consistently poor performance of iNaturalist is puzzling, particularly given the platform’s reputation and extensive community-generated database. One possible explanation lies in the distinction between the platform’s community-driven identification process, which involves human experts reviewing submissions, and its automated computer vision component, which was tested in this trial.

One potential method of improving identification accuracy lies in the provision of location data as a constraining factor in the identification process.

iNaturalist, Google Lens and Apple Siri all frequently suggested North American species in this trial despite the specimens being photographed in the UK. The simple addition of location details would most likely improve the results for these apps.
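One simple way such a location constraint could work is to discard suggestions that are not on a regional checklist and rescale the remaining scores. The Python sketch below illustrates the idea. The function name, the confidence scores and the placeholder non-UK candidate are all hypothetical, and none of the apps tested is known to work this way.

```python
def filter_by_region(candidates, regional_checklist):
    """Keep only candidates on the checklist and rescale scores to sum to 1."""
    kept = {
        name: score
        for name, score in candidates.items()
        if name in regional_checklist
    }
    total = sum(kept.values())
    if total == 0:
        return {}  # nothing plausible for this region: defer to an expert
    return {name: score / total for name, score in kept.items()}


# Invented scores for one photograph; the top suggestion is not a UK species.
candidates = {
    "hypothetical North American species": 0.5,
    "Limnephilus lunatus": 0.3,
    "Limnephilus marmoratus": 0.2,
}

# A tiny stand-in for a UK checklist, using species named in this article.
uk_checklist = {
    "Limnephilus lunatus",
    "Limnephilus marmoratus",
    "Limnephilus flavicornis",
}

filtered = filter_by_region(candidates, uk_checklist)
best = max(filtered, key=filtered.get)
print(best, round(filtered[best], 2))
```

A hard filter like this would also hide a genuine new arrival, so a gentler approach that down-weights out-of-range species rather than removing them may be preferable where invasive species are a concern.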

The practical implications of this trial are clear. For citizen scientists and amateur naturalists, Obsidentify and Insect.ID offer genuinely useful capabilities, correctly identifying approximately three in every five specimens to species level under typical conditions. However, this 60% accuracy rate, whilst impressive for automated systems, remains insufficient for scientific applications requiring definitive identification.

Biodiversity surveys, invasive species monitoring and conservation assessments cannot rely solely on these tools without expert verification. Field naturalists photographing living organisms can expect the best results from most applications, whilst researchers working with museum collections, light trap surveys or Malaise trap samples might want to stick to more traditional identification methods.

This is a small study covering only UK caddisflies. It is possible that with more samples or other insect groups, the relative performances of the different platforms would change. Despite the limitations revealed by this evaluation, there remains considerable cause for optimism regarding the future of AI-powered species identification.

The technology has advanced remarkably in recent years, and applications like Obsidentify demonstrate that accuracy rates approaching genuinely useful levels are already achievable. As training datasets continue to expand and diversify, incorporating millions of verified specimens across broader taxonomic and geographical ranges, and as algorithms become more sophisticated in their ability to integrate morphological features with contextual information such as location, habitat and seasonality, we can expect substantial improvements in performance. The potential of these tools is profound: they offer the possibility of empowering countless citizen scientists, students and nature enthusiasts to engage more deeply with the biological diversity around them, transforming casual encounters with wildlife into opportunities for learning and contributing to scientific knowledge. Whilst AI identification will likely never entirely replace the nuanced expertise of trained taxonomists, it need not do so to be transformative. If these applications can reliably narrow identifications to a handful of candidate species, provide educational information about those possibilities and flag cases requiring expert verification, they will have succeeded in making the natural world more accessible and comprehensible to a generation eager to understand and protect it.

Acknowledgements

The author would like to thank Colin Legg for the images of dead specimens.

Table 1. Results from each of the apps tested.

Live specimens

| Taxonomic level | Obsidentify | iNaturalist | Apple Siri | Google Lens | Insect.ID |
| --- | --- | --- | --- | --- | --- |
| Family | 100% | 67% | 67% | 78% | 100% |
| Genus | 89% | 44% | 56% | 67% | 89% |
| Species | 78% | 22% | 22% | 33% | 78% |

Dead specimens

| Taxonomic level | Obsidentify | iNaturalist | Apple Siri | Google Lens | Insect.ID |
| --- | --- | --- | --- | --- | --- |
| Family | 89% | 67% | 67% | 78% | 67% |
| Genus | 67% | 22% | 56% | 44% | 44% |
| Species | 44% | 22% | 33% | 22% | 44% |

Overall

| Taxonomic level | Obsidentify | iNaturalist | Apple Siri | Google Lens | Insect.ID |
| --- | --- | --- | --- | --- | --- |
| Family | 94% | 67% | 67% | 78% | 83% |
| Genus | 78% | 33% | 56% | 56% | 67% |
| Species | 61% | 22% | 28% | 28% | 61% |

Thank You for 50 Years

Reaching 50 years is a testament to the enduring value of Antenna and the strength of the RES community. Thank you to everyone who has contributed, subscribed, shared photography, written, illustrated or championed the magazine over the decades, and to you, our Members and Fellows, for continuing to support insect science and communication.

Antenna remains a unique space where science meets storytelling – and we’re excited to share the next chapter with you.
